-

Further thoughts on Forth (and Riscyforth in particular) and Life

It’s now two years since I began my Riscyforth project of writing a Forth for RISC-V single board computers. But it wasn’t until yesterday that I wrote my first serious/useful (if you like this sort of thing) program for it, a version of Conway’s Game of Life. (This program wasn’t as long as the unit…

-

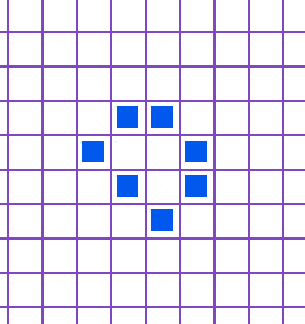

Game of Life animation

I’ve made some improvements to the program and so here’s an animation of the output.

-

Conway’s Game of Life, in Forth

Forty-one years after I wrote my first version of this (in Z80 machine code for the ZX80/ZX81) here’s the latest, in Forth (Riscyforth for a RISC-V SBC). This is hot off the editor, so can no doubt be optimised, but I am pretty pleased with it.

-

Write-only? A simple program translated from C to Forth

The old joke is that Forth is a “write-only language”. In other words, you can write this stuff but you cannot understand it (and hence maintain it). Of course, any endorsement of this view is regarded as a heresy amongst many in the Forth community, but I have to admit that sometimes the Forth-bashers have…

-

Does “coding” have a future?

Today is, for me, the last working day of the year and I was able to finish with a small triumph – successfully solving several programming conundrums that have eaten into my time over a number of weeks. The technology involved – Python – is not one I have had much experience with, and only…

-

Evidence suggests vaccination does cut spread of Omicron

Earlier this week I noticed that some anti-vax propagandists have a new line of attack – they aren’t denying that vaccination protects you against severe illness from Covid, but they are saying that it is false to claim that vaccination limits the spread of the disease and hence it’s also wrong to insist that people…

-

New release of Riscyforth

There is a new release (0.7) of Riscyforth, my Forth for RISC-V based single board computers. This release adds significant support for 64-bit IEEE 754 floating point numbers to broadly match the 2012 Forth standard. The implementation of the Forth floating point word set isn’t yet quite complete and Riscyforth does…

-

The unreasonable ineffectiveness of arithmetic with floating point numbers

Eugene Wigner wrote a famous short essay “The Unreasonable Effectiveness of Mathematics in the Natural Sciences” in 1960. To be honest I find it slightly underwhelming: to me mathematics is the abstraction from physical reality and not – as might be implied by Wigner – that our physical universe is a concretised subset of a…

-

Floating point, finally

What is “floating point”? How do you represent a number like in a compressed and useful way in binary? Any computer science student will be able to tell you that you use a “floating point” representation and, like me a couple of months ago, could probably move on to a hand-wavy explanation that you stored…

-

Trusting the world of floating point

A lot of the everyday calculations we rely on depend on “floating point arithmetic” – in other words an approximation of accurate mathematics and not actual accurate mathematics. For the last six weeks or so I have been working on bringing floating point arithmetic to “Riscyforth” – my assembly-based implementation of Forth for RISC-V “single…
